US: Pentagon gives ultimatum to Anthropic over AI curbs — report

Deutsche Welle • 2/14/2026 – 2/25/2026

Summary

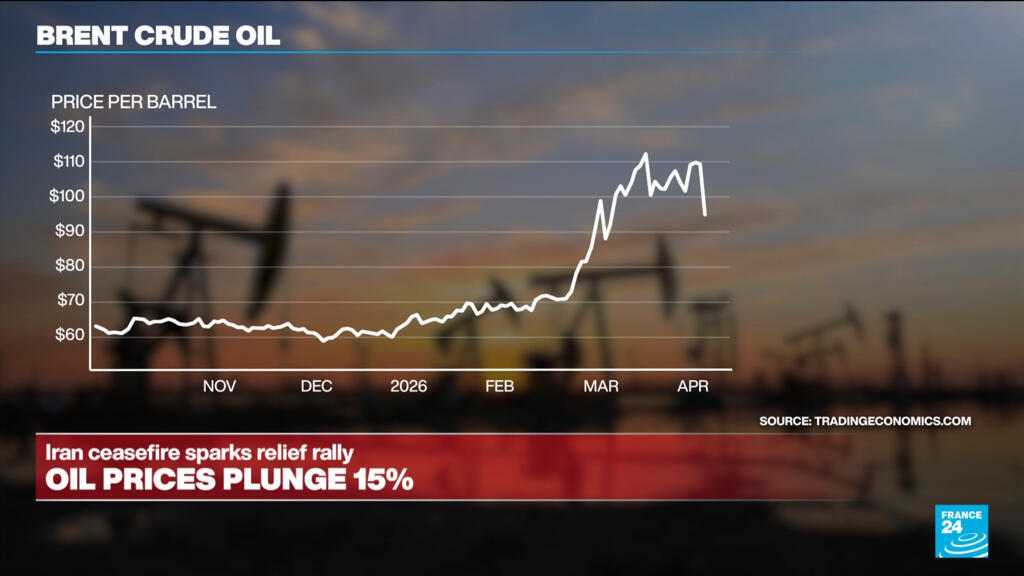

US Defense Secretary Pete Hegseth has reportedly given AI company Anthropic until Friday to agree to allow its technology to be used for military applications. The ultimatum comes amid ongoing tensions between the Pentagon and Anthropic over the use of its AI model, Claude, in military operations. The Pentagon has expressed frustration over Anthropic's usage restrictions, which explicitly prohibit applications for violent purposes, weapon development, or surveillance (France24, The Guardian).

The Wall Street Journal reported that the Pentagon used Claude during a military operation in Caracas aimed at capturing Venezuelan President Nicolás Maduro. The operation resulted in the deaths of 83 people, according to Venezuela's defense ministry, and has raised ethical concerns because such use contradicts Anthropic's terms of use.

The Pentagon is currently negotiating with four AI companies, including Anthropic, to allow military use of their tools for "all lawful purposes." However, talks on extending Anthropic's contract have stalled over additional safeguards the company wants to place on Claude to prevent its use for contentious applications such as mass domestic surveillance and autonomous weaponry (TechCrunch, South China Morning Post). If the talks do not progress, the Pentagon may designate Anthropic a supply chain risk. Hegseth has also threatened to blacklist Anthropic over concerns related to "woke AI." The negotiations highlight the difficulty the military and AI developers face in balancing operational requirements with ethical considerations surrounding AI technology (NPR, Military Times).

Story Timeline

- 2026-02-14

- 2026-02-15

- 2026-02-16

- 2026-02-20

- 2026-02-21

- 2026-02-23

- 2026-02-24

- 2026-02-25 US: Pentagon gives ultimatum to Anthropic over AI curbs — report (current)

Score Breakdown: Risk 25

Stories gain Lindy status through source reputation, network consensus, and time survival.

Same Story from 11 sources
