New method could increase LLM training efficiency

MIT News • 2/26/2026

Summary

Researchers have developed a method that could double the speed of large language model (LLM) training by putting idle computing time to use, without reducing the accuracy of the trained models.

Breaking Similar stories

Flying at the edge of the stratosphere

MIT News • 1 day ago

Steve DiBenedetto’s Cosmic Sense of the Absurd

3 Quarks Daily • 1 day ago

Jed Perl: “Morgan Meis’s Three Paintings Trilogy is the most exciting new writing about the visual arts to appear in a generation”

3 Quarks Daily • 1 day ago

The Vulnerability Of The Liberal Neutral State

Noema Magazine • 1 day ago

To Work for Us, AI Must Not Think for Us

Project Syndicate • 2 days ago

The FTC's Probe of Media Matters for America Is a Blatant Assault on Freedom of Speech

Reason Magazine • Today

State vs. Local, State vs. State

Reason Magazine • 1 day ago

Abundance Pragmatism Fails

Law & Liberty • Today

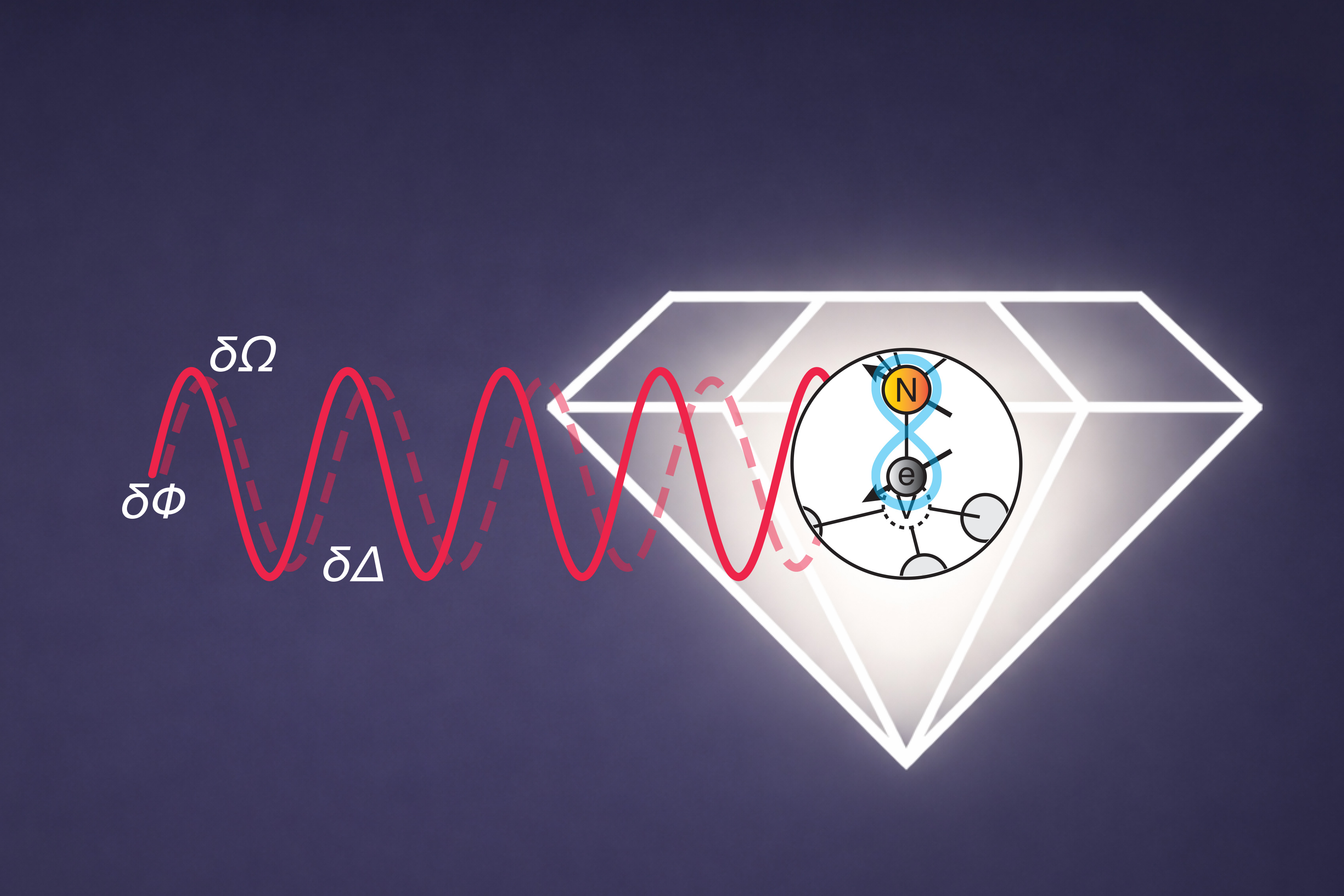

Multitasking quantum sensors can measure several properties at once

MIT News • Today

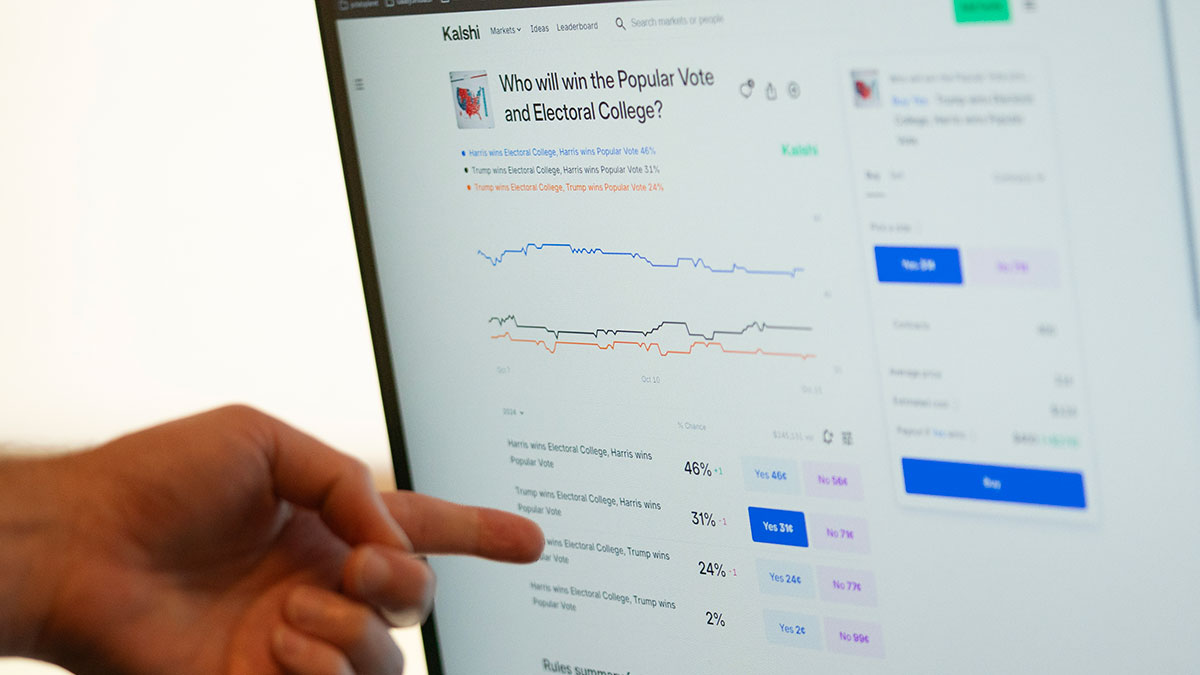

Are Prediction Markets Gambling?

City Journal • Today

Survived Similar stories

Anti-Lindy Similar stories

Louisiana Requires 500 Hours of Training To Braid Hair Professionally. This Bill Would Increase It.

Reason Magazine • 1+ months

Chip-processing method could assist cryptography schemes to keep data secure

MIT News • 1+ months

Sign Up For Advancing American Freedom's Judicial Clerkship Training Academy

Reason Magazine • 3+ months

Mamdani To Increase NYC Property Taxes by 9.5 Percent To Balance Budget if Income Taxes Are Not Raised

Reason Magazine • 1+ months

Europe Must Make AI Firms Pay for Training Data

Project Syndicate • 2+ months

A better method for identifying overconfident large language models

MIT News • 3 weeks ago

Escaping the Trap of Efficiency: The Counterintuitive Antidote to the Time-Anxiety That Haunts and Hampers Our Search for Meaning

The Marginalian • 1+ months

UNC Newspaper Halts Satire and Implements DEI Training After Backlash Over April Fools' Issue

Reason Magazine • 5 days ago

‘Milestone’ research method measures gene activity across whole mice

Science Magazine • 2 weeks ago

A better method for planning complex visual tasks

MIT News • 1+ months